Hi all...

Sorry there's been a bit of a lag between articles. We've been busy trying to get Ganymede out the door and start planning for the future (maintenance releases for 1.6 plus the next major release of DTP for next June). I need to ditch my crystal ball for a magic eight ball I think. :)

Anyway... This week we're going to chat about how to create a new custom driver template.

First of all, when would you want to do this? There are a few possibilities:

1. You're creating support for a new database type not currently covered by DTP Enablement.

2. You want to add support for a third-party driver (such as DataDirect or jTDS) for a currently supported database.

3. You simply want an alternative driver template, alongside an existing one, that adds properties or changes default values for use in your application(s).

That said, let's pick #1. We can build it up as a running example through this article series and eventually add some new database support to DTP Enablement.

For this example, let's work on SQLite support.

You can find a ton of information about SQLite on the SQLite home page (http://www.sqlite.org/). And you can grab the SQLite JDBC driver from the SQLiteJDBC page (http://www.zentus.com/sqlitejdbc/). So we'll grab the SQLite binaries for Windows (in my case) and sqlitejdbc-v051-bin.tgz for the driver. (You'll need to put sqlitejdbc.dll in the bin directory of your JRE, or of the JRE inside your SDK, to get this working.)

Typically the process I work through when deciding whether or not we need a custom connection profile for a given database is as follows:

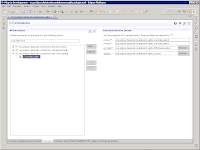

- Can I create a new Generic JDBC driver definition that references the jar (sqlitejdbc-v051-native.jar in this case)? Yes.

- Can I then create a new Generic JDBC connection profile that uses my driver definition from (1) to connect to the database? Yes.

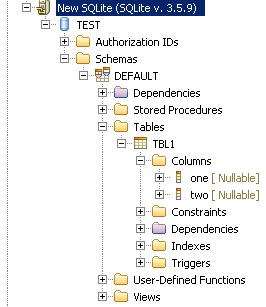

- Can I browse into the database to see schemas, tables, stored procedures, and the like? Unfortunately not in this case.
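As an aside, the first two checks can be roughly approximated outside Eclipse with plain JDBC. The sketch below is only that, a sketch: it assumes the SQLiteJDBC jar (and its native library) is on the classpath, and it uses the driver class and sample URL quoted later in this article. The class name `GenericJdbcCheck` and its helper methods are made up for illustration and are not part of DTP.

```java
import java.sql.Connection;
import java.sql.DatabaseMetaData;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper class, not part of DTP or SQLiteJDBC.
class GenericJdbcCheck {
    // Driver class and sample URL as quoted in this article.
    static final String DRIVER_CLASS = "org.sqlite.JDBC";
    static final String SAMPLE_URL = "jdbc:sqlite:test.db";

    /** Check 1: can the driver class be loaded from the classpath? */
    static boolean driverAvailable(String driverClass) {
        try {
            Class.forName(driverClass);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // Missing jar, or (for a native driver) a missing native library.
            return false;
        }
    }

    /** JDBC-only look at check 3: ask the driver's metadata for table names. */
    static List<String> listTables(Connection conn) throws SQLException {
        List<String> names = new ArrayList<>();
        DatabaseMetaData meta = conn.getMetaData();
        try (ResultSet rs = meta.getTables(null, null, "%", null)) {
            while (rs.next()) {
                names.add(rs.getString("TABLE_NAME"));
            }
        }
        return names;
    }

    public static void main(String[] args) {
        if (!driverAvailable(DRIVER_CLASS)) {
            System.out.println("Driver not on classpath: " + DRIVER_CLASS);
            return;
        }
        // Check 2: can we open a connection with the sample URL?
        try (Connection conn = DriverManager.getConnection(SAMPLE_URL)) {
            System.out.println("Connected. Tables: " + listTables(conn));
        } catch (SQLException e) {
            System.out.println("Connection failed: " + e.getMessage());
        }
    }
}
```

If the driver loads and connects here but the Data Source Explorer still has nothing to browse, as happened in this case, the Generic JDBC profile is not enough on its own.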

This means we need to go a step further and go through these stages:

- Stage 1: Create a new Database Definition for our Database

- Stage 2: Create a new Driver Template for our Database (and the associated UI)

- Stage 3: Create a new Connection Profile for our Database

- Stage 4: Create a Custom Catalog Loader for our Database

So let's start with Stage 1 and get Stage 2 started today...

For each database that is supported in DTP and presents its structure in the Data Source Explorer (DSE), we have to tell the base models what the database supports. This is represented by the Database Definition (or "DB Definition"). What data types does it handle? Does it handle aliases or triggers? What kinds of constraints?

The DB Definition file itself is simply an XMI file (XML Metadata Interchange, an XML format for serializing models). In this case, it is a serialized instance of the EMF model for the DB Definition.

I'm not going to go into the gory details here, but there's a good article on how to get started here (be sure to look at Scenario 2).

Basically, we need to create an XMI file to tell DTP what basic properties this database adheres to: what data types it supports, whether it has catalogs, and so on. Most of this information can be found in the database documentation.

To simplify the process a little, we have a sample Java file that can be customized to create a new XMI file. I've modified it somewhat to create the XMI file locally. And I'll post a zip with the necessary files on the DTP website so you can grab them at the end of this exercise.

Once that's created, you can create a plug-in wrapper called "org.eclipse.datatools.enablement.sqlite.dbdefinition". And in that plug-in wrapper we will use the org.eclipse.datatools.connectivity.sqm.core.databaseDefinition extension point to tell DTP about it. Basically the databaseDefinition extension point just maps the XMI file to a named vendor and a named version. That's how the underlying systems will locate it. (You'll see the terms vendor and version appear later as we define our driver template as well.)

So now we have a DB Definition and a plug-in wrapper for it. Cool. Now we can move to the first part of Stage 2: Creating a driver template.

To create a new driver template, we'll start by creating another plug-in. This plug-in will house all the non-UI bits and pieces we want for our SQLite connection profile. We'll call it "org.eclipse.datatools.enablement.sqlite".

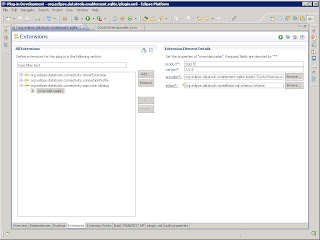

In the manifest for our new SQLite plug-in, we will create a new org.eclipse.datatools.connectivity.driverExtension extension. This extension point is used to register driver template categories and driver templates within the DTP framework.

Remember how we were talking about vendor and version earlier? Well, now we're going to map some driver template categories to them.

First we'll create a category that maps back to the vendor name we chose earlier. We'll call it "SQLite", give it the ID "org.eclipse.datatools.enablement.sqlite.driver.category", and set org.eclipse.datatools.connectivity.db.driverCategory as its parent. All database drivers fall under that parent category in DTP so we can easily find them.

Next, we'll create a category that maps to the version we selected earlier (3.5.9 is the most recent version of SQLite I could find). This one will use our "SQLite" category as its parent. We'll call it "3.5.9" and give it the ID "org.eclipse.datatools.enablement.sqlite.3_5_9.category".

Lastly, we'll create the driver template itself. We'll give it the name "SQLite JDBC Driver" (not very original, but easy to remember) and the ID "org.eclipse.datatools.enablement.sqlite.3_5_9.driver". To give it some context, we'll set its parent category to the 3.5.9 category we made a second ago, "org.eclipse.datatools.enablement.sqlite.3_5_9.category".
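To make the parent chain easier to picture, here's a small Java model of the three registrations. This is purely illustrative and is not how DTP stores them (the real registration lives in the plug-in manifest): the `DriverCategoryTree` class is hypothetical, and the display name "Database" for the root category is a placeholder I've assumed. The IDs and the other names are the ones chosen above.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of the category/template hierarchy, not DTP API.
class DriverCategoryTree {
    // id -> parent id, and id -> display name.
    private final Map<String, String> parents = new LinkedHashMap<>();
    private final Map<String, String> names = new LinkedHashMap<>();

    void register(String id, String name, String parentId) {
        names.put(id, name);
        parents.put(id, parentId); // null parent marks the root
    }

    /** Walks parent links upward and returns display names from the top down. */
    List<String> pathTo(String id) {
        List<String> path = new ArrayList<>();
        for (String cur = id; cur != null; cur = parents.get(cur)) {
            path.add(0, names.getOrDefault(cur, cur));
        }
        return path;
    }

    static DriverCategoryTree sqliteExample() {
        DriverCategoryTree tree = new DriverCategoryTree();
        // Root category for all database drivers ("Database" is a placeholder name).
        tree.register("org.eclipse.datatools.connectivity.db.driverCategory",
                "Database", null);
        // Vendor category.
        tree.register("org.eclipse.datatools.enablement.sqlite.driver.category",
                "SQLite", "org.eclipse.datatools.connectivity.db.driverCategory");
        // Version category.
        tree.register("org.eclipse.datatools.enablement.sqlite.3_5_9.category",
                "3.5.9", "org.eclipse.datatools.enablement.sqlite.driver.category");
        // The driver template itself, parented by the version category.
        tree.register("org.eclipse.datatools.enablement.sqlite.3_5_9.driver",
                "SQLite JDBC Driver", "org.eclipse.datatools.enablement.sqlite.3_5_9.category");
        return tree;
    }

    public static void main(String[] args) {
        System.out.println(String.join(" > ", sqliteExample()
                .pathTo("org.eclipse.datatools.enablement.sqlite.3_5_9.driver")));
    }
}
```

The point is simply that each entry names its parent by ID, so the driver template ends up nested under the version category, which sits under the vendor category.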

We know the template needs a driver jar, so we'll provide "sqlitejdbc-v051-native.jar" as the default jar name. If we get fancy later, we can provide some mechanisms to pre-populate the path to the local version of that jar, but for now we'll assume the user knows where their jar is located and can set it appropriately in the driver definition. (Yes, we'll talk about the "fancier" way to do this automatically later.)

Beyond that, we need to get a few key bits of information about the driver. Based on the documentation for SQLite, it appears that we require the following property values:

- Driver Class: org.sqlite.JDBC

- JDBC URL: jdbc:sqlite:test.db

Easy enough, right? Well, we also require a few other things for a standard driver definition:

- Vendor: SQLite

- Version: 3.5.9

- Database name: TEST (we can extrapolate this from the sample URL)

- User ID: (not applicable, so we just leave it blank)
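Pulled together, the defaults above might look something like this in Java. It's a minimal sketch and not DTP code: the `SqliteTemplateDefaults` class is hypothetical, the map keys are just the labels from the lists above rather than real DTP property IDs, and upper-casing the file name to get the database name is only my guess at a convention that yields TEST from test.db.

```java
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;

// Hypothetical holder for the template defaults, not part of DTP.
class SqliteTemplateDefaults {
    static final String URL_PREFIX = "jdbc:sqlite:";

    /** Derives a database name from a SQLite JDBC URL, e.g. test.db gives TEST. */
    static String databaseNameFromUrl(String url) {
        if (!url.startsWith(URL_PREFIX)) {
            throw new IllegalArgumentException("Not a SQLite JDBC URL: " + url);
        }
        String path = url.substring(URL_PREFIX.length());
        // Keep only the file name, whichever path separator was used.
        int slash = Math.max(path.lastIndexOf('/'), path.lastIndexOf('\\'));
        String file = path.substring(slash + 1);
        // Drop the extension, if there is one.
        int dot = file.lastIndexOf('.');
        String base = dot > 0 ? file.substring(0, dot) : file;
        return base.toUpperCase(Locale.ROOT);
    }

    /** The default property values listed in this article, in order. */
    static Map<String, String> defaults() {
        String url = "jdbc:sqlite:test.db";
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Driver Class", "org.sqlite.JDBC");
        props.put("JDBC URL", url);
        props.put("Vendor", "SQLite");
        props.put("Version", "3.5.9");
        props.put("Database name", databaseNameFromUrl(url));
        props.put("User ID", ""); // not applicable for SQLite, left blank
        return props;
    }

    public static void main(String[] args) {
        defaults().forEach((key, value) -> System.out.println(key + ": " + value));
    }
}
```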

With these basic bits and pieces, we have defined our driver template! Whew. Took a bit of work though, I know.

That said, we now have reusable bits we can take into the next part of this process, which is creating a connection profile that can use our new driver definition and driver template.

At this point we're just laying the groundwork. You can find a zip with the plug-ins created during this exercise here.

So next time we'll look at creating a basic connection profile that can actually use these bits!

--Fitz
